The beginning of automated zero-day discovery

A new phase of cybersecurity competition is emerging: the race to automate vulnerability discovery at scale.

On one side is Anthropic’s Claude Mythos Preview, a restricted AI model released under Project Glasswing, designed to help selected organizations discover and fix vulnerabilities in critical software before attackers can exploit them. Anthropic says Mythos has demonstrated the ability to identify complex vulnerabilities, generate working exploits, chain multiple bugs, and even achieve control-flow hijack against fully patched targets in benchmark environments.

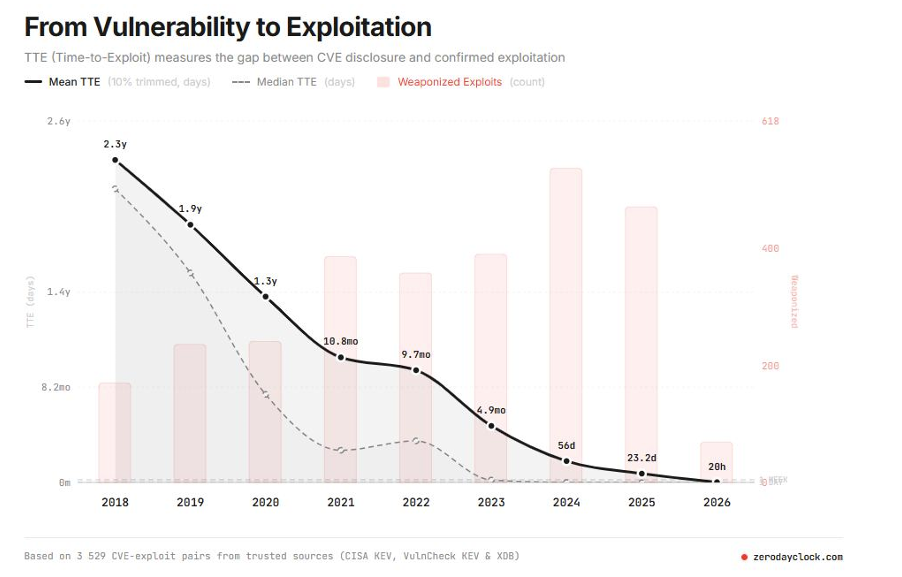

The Cloud Security Alliance (CloudSecurityAlliance.org) has published another document that underscores how quickly AI can accelerate hacking. In its strategy briefing, “The AI Vulnerability Storm: Building a Mythos-ready Security Program,” CSA argues that AI-driven vulnerability discovery and exploit development are compressing exploit timelines from weeks to hours, creating a new “defender timeline” that many organizations are not operationally prepared to meet.

What makes this briefing particularly useful is that it is not just commentary—it is structured as a practical playbook for security leaders (including a risk register, priority actions, and board-ready briefing material). The overall message is clear: if attackers can operate at “AI speed,” then reducing the attack surface requires more than tools—it requires a well-established process, continuous vulnerability operations (VulnOps), and faster decision-making cycles across engineering, IT, and security leadership.

On the other side, reports from China suggest that 360 Digital Security Group is developing similar AI-driven vulnerability discovery capabilities. According to reporting and analysis by Natto Thoughts and SecurityWeek, 360 claims that its internal multi-agent vulnerability discovery system helped identify close to 1,000 vulnerabilities, including more than 50 high-severity flaws across products such as Windows, Microsoft Office, Android, OpenClaw, IoT devices, and other software.

These claims still require careful independent validation. But the direction is clear: AI is moving from a supportive tool for security researchers to a core engine for vulnerability discovery.

Claude Mythos: defensive AI with offensive potential

Anthropic has positioned Claude Mythos as a defensive instrument. Through Project Glasswing, selected partners receive access to the model to test foundational systems and to perform local vulnerability detection, black-box testing, endpoint security analysis, and penetration testing. Anthropic has also committed significant credits and funding to support open-source maintainers and security foundations.

The problem is that the same capability that helps defenders discover vulnerabilities faster can also help attackers do the same.

Anthropic’s own technical description is striking. The company reported that Mythos Preview was able to create complex exploit chains, including browser exploit scenarios and privilege-escalation paths. It also stated that non-security engineers were able to use the model to find and exploit serious vulnerabilities with limited human intervention.

This is the central dilemma of AI-driven cyber defense:

the tool that finds the vulnerability can also operationalize it.

That risk became even more visible when Reuters reported that unauthorized users allegedly accessed Anthropic’s Mythos model through a third-party vendor environment. Anthropic said it was investigating the report and that Mythos was being deployed only through a controlled defensive initiative.

For CISOs, the lesson is brutal but simple: even restricted frontier cyber models create a new supply-chain risk. Access control, vendor governance, and model environment isolation become as important as the model’s internal safety controls.

China’s 360 and the industrialization of vulnerability research

The Chinese case is strategically different.

360 Digital Security Group is not a small research team. It is one of China’s most visible cybersecurity companies, with a track record in exploit research and competition environments. According to Natto Thoughts, 360’s team won first place at the revived 2026 Tianfu Cup and described AI as having evolved from an auxiliary tool into the “core engine” of vulnerability discovery.

SecurityWeek reports that 360’s claims center on an internally developed Multi-Agent Collaborative Vulnerability Discovery System, which allegedly contributed to roughly half of the vulnerabilities identified during the contest and supported discoveries across major software ecosystems. One claim involved a critical Microsoft Office vulnerability allegedly found by an AI agent within minutes, although another Windows kernel vulnerability claim appears disputed because Microsoft credited different researchers.

This distinction is important. Not every vendor claim should be treated as proven fact. But even exaggerated claims can reveal real capability development. If AI-assisted systems are now producing a competitive advantage in elite hacking contests, then enterprise defenders must assume that similar automation will soon appear in both state-backed and criminal workflows.

Why the Chinese institutional context matters

The most concerning aspect is not only the technology. It is the vulnerability disclosure ecosystem around it.

China’s vulnerability management rules require network product providers to report relevant vulnerabilities to the Ministry of Industry and Information Technology’s vulnerability information-sharing platform within two days. The rules also restrict public disclosure before patches are available and prohibit providing non-public vulnerability information to overseas organizations other than the affected product provider.

From a defensive perspective, such a system can centralize vulnerability handling. From a geopolitical perspective, it can also create an asymmetry: Chinese authorities may receive early visibility into vulnerabilities before global vendors, customers, and defenders have fully remediated them.

This is why AI-driven vulnerability discovery in China could become strategically powerful. If automated systems dramatically increase the number of discovered flaws, and if those flaws flow through a state-controlled reporting structure, then the time between discovery and potential operational use could shrink.

That does not mean every discovered vulnerability becomes an offensive tool. But it does mean CISOs should no longer think of zero-days as rare, artisanal discoveries. They may increasingly become industrial outputs.

OpenClaw: the new AI-agent attack surface

The mention of OpenClaw is especially relevant because it shows another side of the problem: AI agents themselves are becoming vulnerable platforms.

IBM X-Force describes OpenClaw as a self-hosted autonomous AI agent capable of browsing the web, managing files, and reading, writing, and executing code locally. IBM also notes that agentic systems combine local data access, external content interaction, outbound communication, browser automation, file-system access, and LLM decision-making, a combination that dramatically expands the attack surface.

In practical terms, AI agents are not just tools. They are new execution environments.

If attackers compromise an agent, they may gain access to credentials, files, workflows, development environments, and automated decision paths. IBM also notes that many OpenClaw issues involve command execution, plaintext keys, credentials, prompt injection, malicious skills, and unsecured endpoints.

For CISOs, this means AI security cannot be limited to “LLM usage policy.” It must include secure architecture, identity controls, secrets management, sandboxing, logging, and incident response for autonomous systems.
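One way to make this concrete is a small inventory check that flags agents whose capabilities match the risky combination IBM describes, and lists which of the controls named above are missing. The sketch below is illustrative only. The capability and control names, the `AgentProfile` type, and the `review_agent` function are hypothetical labels chosen for this example, not part of OpenClaw or any IBM tooling, and only the four controls that are easy to record in an inventory are covered.

```python
from dataclasses import dataclass, field

# Capabilities the article associates with an expanded attack surface.
# The identifiers themselves are hypothetical labels for this sketch.
HIGH_RISK_CAPABILITIES = {
    "local_data_access",
    "external_content",
    "outbound_communication",
    "browser_automation",
    "file_system_access",
    "code_execution",
}

# A subset of the controls named in the text; treating them as a
# mandatory baseline is an assumption of this example.
REQUIRED_CONTROLS = {
    "identity_controls",
    "secrets_management",
    "sandboxing",
    "logging",
}


@dataclass
class AgentProfile:
    name: str
    capabilities: set[str] = field(default_factory=set)
    controls: set[str] = field(default_factory=set)


def review_agent(agent: AgentProfile) -> dict:
    """Flag an agent as privileged and list the baseline controls it lacks."""
    risky = agent.capabilities & HIGH_RISK_CAPABILITIES
    # Controls are only demanded when at least one risky capability is present.
    missing = REQUIRED_CONTROLS - agent.controls if risky else set()
    return {
        "agent": agent.name,
        "privileged": bool(risky),
        "risky_capabilities": sorted(risky),
        "missing_controls": sorted(missing),
    }


# Hypothetical agent used only to exercise the check.
agent = AgentProfile(
    name="build-helper",
    capabilities={"file_system_access", "code_execution", "outbound_communication"},
    controls={"logging"},
)
report = review_agent(agent)
print(report)
```

A check like this does not secure anything by itself. Its value is in forcing every autonomous agent into the same inventory and review process as other privileged workloads.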

The strategic implication: vulnerability discovery is becoming scalable

The old model of vulnerability research was constrained by human expertise, time, tooling, and creativity. AI changes all four.

A sufficiently capable system can:

- Analyze large codebases continuously.

- Generate hypotheses about vulnerable patterns.

- Build proof-of-concept exploit paths.

- Chain multiple weaknesses.

- Prioritize exploitable findings.

- Repeat the process at machine speed.

This creates a new asymmetry. Attackers only need one working path. Defenders must protect the entire attack surface.

The situation becomes even more dangerous when AI models can reduce the expertise barrier. Anthropic’s own description indicates that non-experts were able to use Mythos Preview to produce serious exploitation outcomes in controlled contexts.

That means the future attacker may not need to be a world-class exploit developer. The attacker may only need access to the right model, the right target, and enough patience.

What CISOs should do now

CISOs should treat AI-driven vulnerability discovery as an emerging strategic risk, not as a future research topic.

The immediate priorities are clear:

First, reduce exposure. Internet-facing assets, legacy systems, unmanaged endpoints, VPNs, remote access platforms, and forgotten applications must be aggressively inventoried and hardened.

Second, accelerate patch governance. If discovery accelerates, patch cycles must accelerate too. Monthly patching will not be sufficient for high-risk assets.

Third, prioritize by exploitability, not only by CVSS score. AI systems may turn medium-severity vulnerabilities into practical exploit chains. Prioritization must consider exposure, asset criticality, exploit maturity, identity context, and chaining potential.

Fourth, secure AI agents as privileged systems. Any autonomous AI tool with file access, code execution, browser access, API tokens, or outbound communication must be treated like a high-risk workload.

Fifth, prepare for vulnerability volume shock. Security teams should expect more disclosures, more partial advisories, more vendor confusion, and more cases where vulnerabilities appear before CVE enrichment is complete.

Sixth, update threat models. AI-assisted attackers will move faster. Detection engineering, EDR telemetry, memory behavior analysis, identity anomaly detection, and exploit-prevention controls become even more important.
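The third priority, ranking findings by exploitability rather than by CVSS alone, can be sketched as a simple weighted score. This is a minimal illustration under stated assumptions: the weights, the 0 to 1 scales, the equal split between CVSS and context, and the `Finding` and `priority_score` names are all hypothetical and would need calibration against an organization's own data.

```python
from dataclasses import dataclass

# Hypothetical weights for the contextual factors named in the text.
# They sum to 1.0 and are not derived from any published standard.
WEIGHTS = {
    "exposure": 0.25,
    "asset_criticality": 0.25,
    "exploit_maturity": 0.20,
    "identity_context": 0.15,
    "chaining_potential": 0.15,
}


@dataclass
class Finding:
    name: str
    cvss: float                # base score, 0.0-10.0
    exposure: float            # 0 = isolated, 1 = internet-facing
    asset_criticality: float   # 0 = lab system, 1 = business-critical
    exploit_maturity: float    # 0 = theoretical, 1 = exploited in the wild
    identity_context: float    # 0 = no privileged identities reachable, 1 = admin accounts reachable
    chaining_potential: float  # 0 = standalone, 1 = readily combined with other flaws


def priority_score(f: Finding) -> float:
    """Return a 0-100 score blending CVSS with contextual factors."""
    context = sum(weight * getattr(f, factor) for factor, weight in WEIGHTS.items())
    # Assumption: CVSS and context each contribute half of the final score.
    return round(50 * (f.cvss / 10) + 50 * context, 1)


# Made-up findings used only to show how the ranking behaves.
findings = [
    Finding("critical bug on isolated lab host", 9.8, 0.1, 0.2, 0.2, 0.1, 0.1),
    Finding("medium bug on internet-facing VPN", 6.5, 1.0, 0.9, 0.8, 0.9, 0.9),
]

for f in sorted(findings, key=priority_score, reverse=True):
    print(f"{priority_score(f):5.1f}  {f.name}")
```

With these made-up inputs, the medium-severity flaw on the internet-facing VPN ranks above the critical flaw on the isolated lab host, which is the reordering the priority calls for.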

Conclusion: the zero-day economy is changing

The 360 Digital Security Group claims may be partially promotional. Anthropic’s Mythos capabilities may still be available only to a controlled group. Independent validation remains limited.

But the trend is unmistakable.

AI is beginning to automate one of the most valuable capabilities in cybersecurity: the discovery and exploitation of unknown vulnerabilities.

For defenders, this is both an opportunity and a warning. The same technology can help secure critical software faster than ever before. But once comparable capabilities spread to state actors, criminal groups, and uncontrolled private operators, the global vulnerability landscape will become faster, noisier, and more dangerous.

The CISO’s challenge is no longer only to defend against known threats.

The challenge is to prepare for a world where unknown vulnerabilities are discovered at machine speed.